Theories regarding the “cause” of autism abound. In the 1960s much was made of the psychoanalytic notion that bad parents, especially “refrigerator mothers”, could “cause” autism in their children by disturbing them emotionally. This theory became dominant across the West – leading to children being cruelly ripped away from their guilt-ridden mothers – until the late 1970s, when the combined might of increasingly fierce parent advocacy groups, and growing scientific consensus, pushed it to the hazy fringes of psychiatric discourse.

Since the 1990s there has been increased talk of an “epidemic”, and the blame has instead been pinned on vaccines. This theory was first espoused by British researcher Andrew Wakefield, and it caused quite a stir. Parents stopped vaccinating their children, leading to outbreaks of disease worldwide. Nonetheless, as with the refrigerator mother theory, a scientific consensus emerged that there was, despite incessant searching, no evidence whatsoever to support this hypothesis. In fact, although the myth is still periodically dredged up by uninformed celebrities such as Donald Trump, Wakefield has now been exposed as a fraud, and struck off the UK medical register.

By contrast, more reputable researchers have tended to focus on twins, genetics, epigenetics, and various environmental factors. From the 1970s onward, for example, twin studies indicated that autism was probably inborn and hereditary. Since then, there has been an incessant search for so-called “risk” genes, as well as research on genomic imprinting and environmental factors, in order to explain the “cause” of autism – which itself is now seen more as a neurocognitive style, accompanied by varying levels of disability, than as an emotional disturbance.

In line with this, much has also been made of the fact that most of those diagnosed have been boys, which scientists now often take to indicate some kind of inherent link between autism and the male sex. Professor Simon Baron-Cohen, for example, characterises autism in terms of the “extreme male brain”. This is something he associates with rational, systematic thinking coupled with a lack of social understanding, which he hypothesises to stem from an overload of “male” hormones in the womb during pregnancy. Similarly, Dr Christopher Badcock suggests that autism may be a “hyper-mechanistic” kind of mind that stems from paternal genomic imprinting, again linking autism to the male sex.

Nonetheless, these biologised and sexed ways of understanding autism are, ultimately, unconvincing as well. On the one hand, there are multiple problems with the purported association between autism and biological maleness. First, it seems to conflate sex (more biological) with gender (more cultural) in a dogmatic and problematic way, without taking into account the highly complex way in which the two interact. Second, even though people on the autism spectrum do tend to have these purportedly “masculine” traits, they also tend to lack many other traits associated with masculinity, for example, being good at sport and banter. Third, even though autism has, historically, been diagnosed mostly in boys and men, there is now growing awareness that many girls and women also share the cognitive traits associated with the condition, further problematising the assumed association between autism and men in a neurobiological sense.

In turn, beyond these gender troubles, when we review the scientific literature as a whole, things begin to seem a lot more complicated with regard to the purported biological essence of the condition as such. In fact, what the research findings have shown is that over a thousand genes, alongside a huge range of environmental factors – ranging from one’s proximity to busy roads to the age of the mother during pregnancy – seem to increase the chance of tending towards the separate cognitive and behavioural traits associated with the condition. But, crucially, the condition as a whole has no single cause, or even a range of combined causes. Similarly, there is no neurological essence of the condition: despite systematically misleading reporting by enthusiastic science journalists, the differences seem to be unique in each case, and studies purporting to find some neurological unity are rarely replicated.

What this indicates, as Professor Lynn Waterhouse argues in her 2013 book Re-thinking Autism, is that the fundamental error guiding our understanding of autism is the assumption that it really is one biological thing, or even a “spectrum” of things. ‘Autism’, she writes, ‘is not one disorder or many “Autisms” but is a set of symptoms. The heterogeneity and associated disorders suggest that autism symptoms, like fever, […] signal a wide range of underlying disorders’. Given this (and putting aside her problematically pathologising vocabulary), talk regarding the “cause” of autism as such, when thought of in terms of a physical cause, doesn’t really make much sense (and this is so even though each single case might have its own disparate physical cause). The question, in other words, is driven by a fundamental unsupported assumption: that autism is a natural category, like “gold” or “mammal”, rather than a social category, like “black” or “female”.

In contrast to these dominant biologised approaches, then, my own concern with the autism epidemic’s “cause” lies elsewhere – beyond the biomedical, and towards the normative. In fact, we should really be more interested in the social causes of our categorisation of autism (including those which have since caused that category to broaden and change) than in the biological underpinnings of the traits we associate with autism in any given single case. For, once we accept that autism has no unified physical essence, this seems to me to be the most valid way of talking about what “caused” autism to come into being as a distinct human kind, and then to expand into a broad “spectrum” – or, indeed, “epidemic”.

When looked at from this angle, the first thing to note is that psychiatrists don’t just go around medicalising people at random. Rather, as the pioneering psychiatrist Karl Jaspers noted in his 1913 book General Psychopathology: ‘What is “ill” depends less on the judgement of the doctor than on the judgement of the patient and on the dominant views in any given cultural circle’. In other words, psychiatrists end up medicalising whoever happens to come to, or is sent to, them for “help” at any given time. But, in turn, whoever does end up being implicated as pathological, and in need of help, will already have been delineated by the wider norms of society – and, more specifically, whom these norms exclude.

Consider, for example, how homosexuality was wrongly medicalised as a mental disorder in the mid-20th Century. Initially, homosexuality ended up being medicalised, in part, because homosexuals (to use the lingo of the time) began going to, or being sent to, their doctors in large numbers to seek “help” with their homosexual urges. But the reason they were sent was that society was already homophobic; that is, society had already pathologised being gay as somehow sick, and beyond hegemonic hetero-normativity. Thus, in its medicalisation of homosexuality, institutional psychiatry acted more as a catalyst for these more general social norms than as their cause – and the same is the case for many other psychiatric classifications.

Bearing the relationship between social norms and psychiatric medicalisation in mind, we might, then, similarly ask which norms led to autism, when disentangled from intellectual disability, being categorised as a distinct kind of human, in need of medical attention, in the first place. In other words, to locate the “cause” of autism arising as a distinct human kind, we need to ask not what its biological underpinnings are, but rather which social norms changed, and in what way, for those we now label as being “mildly” autistic or as having “Asperger’s syndrome” to begin to emerge as problematic – something that first happened briefly in Austria in the 1930s, and then again in Britain, before the rest of the West, in the 1980s.

Turn, first, to 1930s Austria, where Dr Hans Asperger and colleagues began noticing a newly distinctive kind of person. To be sure, it had long been the case that those autistic persons with more notable disabilities, for example profound intellectual disability, emerged as being problematic – it was just that they were thought of as, say, “feeble-minded” or “schizophrenic” rather than “autistic”. But around this time, and for the first time in history, various boys (they were always boys, back then) whom we might now class as having “Asperger’s syndrome” or “high-functioning autism” began being sent to clinics in the German-speaking world.

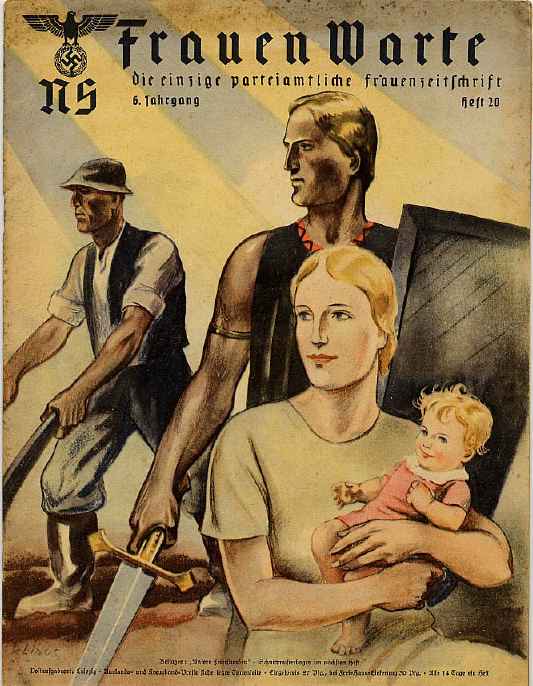

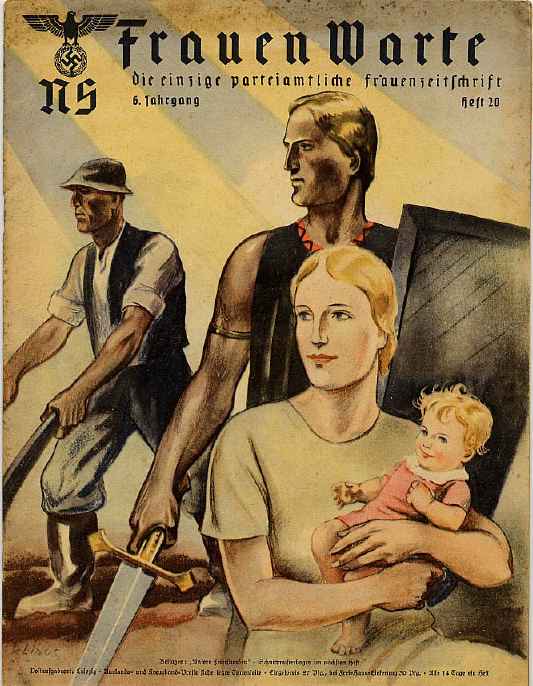

When considering the social causes of the emergence of autism in 1930s Vienna, it is initially significant that it coincided with the rise of Hitler, the Nazi Party, and the German occupation of Austria. On the one hand, as I have written about previously, the Nazi Party subscribed to a Social-Darwinist ideology that drove them to categorise and attempt to eliminate what they considered abnormal behaviours. This goes part way to explaining why divergent persons were increasingly pathologised. However, this alone doesn’t explain why those specific behaviours we now call autistic ended up being deemed abnormal, and only in boys, whilst other “male” behaviours – gambling, womanising, or lying – were not then seen as problematic.

As it turns out, though, this may be explained by gender norms in Nazi Germany, which were intertwined with the drive to sterilise and exterminate the cognitively disabled. On the one hand, in Nazi ideology, the key role of men was to contribute to the state, and the key role of women was to reproduce. Thus, for those who were profoundly cognitively disabled, neither men nor women would be seen as fit to fulfil their gender roles, meaning they were exterminated. In turn, though, at a more subtle yet equally pervasive level, Nazi ideology also promoted a hyper-masculinity, whereby manliness was specifically associated with heroic group activities. The ideal traits associated with the “new man” were thus to develop a “soldier mentality”, join brotherly male-dominated organisations such as the SS, and fight together in battles. Aside from this, there was also a huge patriarchal pressure for men to marry “hereditary fit” Aryan women, reproduce, and instil Nazi values into their children. Without exhibiting all these traits, males would not be considered “real” men, and would have fallen outside the realms of normality.

This, more than anything else, may account for why those boys who were previously considered “normal” were suddenly showing up everywhere as problematic. Given that those we now label as having “Asperger’s syndrome” are more in line with what we now think of as “geek” culture – solitary, lacking social attunement, and interested in mechanistic or philosophical pursuits – they would have fallen well outside the Nazi ideal of the “new man”. That is to say, they would have seemed neither particularly good at marrying, due to their purported problems in socialising, nor likely to fall in with this “soldier mentality”, since they tend to be isolated, original thinkers, unlikely to be swept up in crowd madness. In short, as Dr Asperger noted in 1944, his autistic patients tended to ‘follow only their own wishes, interests and spontaneous impulses, without considering restrictions or prescriptions imposed from outside’ – traits which would have made them highly problematic from the inside viewpoint of the Nazi drive towards homogenous, hyper-masculine group mentality.

If this suggestion seems unreasonable, consider how long it took for Asperger’s syndrome to end up being deemed an issue in the UK and the rest of the West. Whilst it was deemed problematic in the German-speaking world, briefly, in the 1930s and 1940s, it didn’t systematically appear as an issue in the rest of the West until the 1980s. Although biologised approaches to autism cannot easily account for this huge gap, one clear social explanation is that, during the first half of the 20th Century, gender norms in the liberal West were very different from those in Nazi Germany. In fact, the modernist male ideal in the rest of the West was much more in line with those traits we now associate with Asperger’s: being rational, clear, fixed in focus, and lacking empathetic attunement were all celebrated in the modernist masculine ideal.

Consider, as Patrick McDonagh has argued, how many heroes and anti-heroes produced by modernist writers (ranging from Beckett to Kafka) can retrospectively be seen to exhibit remarkable similarities to those bodies now labelled as having Asperger’s syndrome. One example is Albert Camus’ “outsider” Meursault, who has been described as a ‘striking depiction of a high-functioning autistic’. This is not just in light of his intense sensory overload under the blazing Algerian sun, but also, as Camus himself described him, his being ‘an outsider to the society in which he lives, wandering on the fringe, on the outskirts of life, solitary, and sensual’. In stark contrast to the hyper-masculinity of Nazi Germany, these traits were, wrongly or rightly, positively fetishised in men throughout the first half of the 20th Century in much of the modernist West, meaning that they would not have been deemed pathological and in need of medicalisation.

Whilst these traits were celebrated in the modernist era, they increasingly began to show up as problems in Britain during the 1980s – meaning that something had changed in British social normativity. Interestingly, according to critical psychiatrist Sami Timimi and colleagues, this largely happened in light of the rise of the neo-liberal market system, and in particular the service economy. This economic shift began to alter the notion of the ideal male: rather than being fixed in focus and obsessive, men now increasingly had to shift forever into new roles and constantly sell their “self” in order to fit in. Members of the workforce, in other words, now had to become increasingly agile, flexible, narcissistic, and hyper-social in order to succeed and be valued – and this economic drive became reflected in social normativity at all levels of society.

Thus, whereas modernist conceptions of masculinity tended to celebrate autistic traits, neoliberal economic ideology began to alter idealised conceptions of masculinity in a way that cast those traits as pathological. Boys who fell outside these norms began showing up at clinics, and a renewed interest in Hans Asperger’s previously overlooked publications from the 1940s, led by British psychiatrist Lorna Wing, suddenly emerged in order to account for this. By the mid-1990s, Asperger syndrome had been added to all the major diagnostic manuals, the “spectrum” had radically broadened, and diagnoses of the condition had skyrocketed.

In both times and places where Asperger’s syndrome came to be seen as a distinctively problematic condition – first, briefly, in Nazi-occupied territory during the 1930s and 1940s, and then again in neo-liberal Britain, Europe, and the United States, from the late 1980s onward – shifting gender norms help account for why the condition began to show up as problematic, and in increasingly subtle cases. Gender norms, in other words, can account for the “cause” of autism, and the autism “epidemic”, in the only way that notion makes any sense: not as something physical, but rather as something that came into being, and grew, as a distinct social grouping at some point in history.